A quick note on blog timing: I submitted this for publication about two weeks before Matterport and Hexagon announced their partnership to allow the BLK360 to be used in the Matterport Capture app. So, there is a reason why I don’t mention it in the article! However, I will say that I am excited about the prospects of opening up the Matterport Cloud to additional collection devices. I was already using the Pro2 with other scanners during my testing and found it to be quite useful in-house; allowing clients to see this as well should really help Matterport grow into the AEC disciplines. Unfortunately, we’ve not heard any specific timeline for this integration (other than sometime in 2018), so I’m not able to actually test it out at this time. Without further ado, let’s look at what you can do with the system today.

Thanks to Matterport, I was able to play with one of their new Pro2 cameras for a few weeks this summer. As a longtime user of traditional static laser scanners, I have not yet made the jump into low-cost scanning, though it’s an ever-growing market. Low-cost collection hardware is definitely hot at the moment, with companies like FARO and Leica joining the game alongside several newer manufacturers. I think we all realize that, for the price of these devices, we will make trade-offs versus traditional scanning devices. I took it upon myself to find those limitations with the Pro2.

For those unfamiliar, Matterport came out of the real estate industry. As a result, image quality, ease of use, and automated features are the top priorities; accuracy is not. That’s not to say that it is crudely inaccurate. The data from a single setup/scan is good. Where you get into trouble is the aggregation of error in the registration of multiple setups. If you are looking for hardware for a Scan-to-BIM solution, this is probably not the unit for you. If you want data for a virtual walkthrough, online advertising, quick floor plans, or VR, the Pro2 is well worth a look.
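To make the point about aggregated registration error concrete, here is a minimal sketch of how a small per-setup error can grow along a chain of setups. It is a textbook error-propagation model, not Matterport’s algorithm, and the per-link error value is purely hypothetical: independent random errors grow with the square root of the number of links, while a consistent bias grows linearly.

```python
import math


def chained_error(per_link_error: float, links: int, systematic: bool = False) -> float:
    """Estimate accumulated error along a chain of registered setups.

    per_link_error: assumed error added by each scan-to-scan alignment
                    (hypothetical value, in whatever length unit you use).
    links:          number of alignments between the first and last setup.
    systematic:     True models a consistent bias (linear growth);
                    False models independent random errors (sqrt growth).
    """
    if links < 0:
        raise ValueError("links must be non-negative")
    if systematic:
        return per_link_error * links
    return per_link_error * math.sqrt(links)


# Hypothetical example: 0.01 ft of error per alignment over 100 alignments.
random_case = chained_error(0.01, 100)           # sqrt growth
biased_case = chained_error(0.01, 100, True)     # linear growth
print(f"random: {random_case:.2f} ft, biased: {biased_case:.2f} ft")
```

Either way, the takeaway matches the text: a single setup can be good while a long, untargeted chain of setups drifts.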

To be perfectly honest, every issue I found fell into one of three categories: lack of accuracy, limited setup distances, and/or lack of processing controls. But first, let’s look at what the Matterport Pro2 is designed to do.

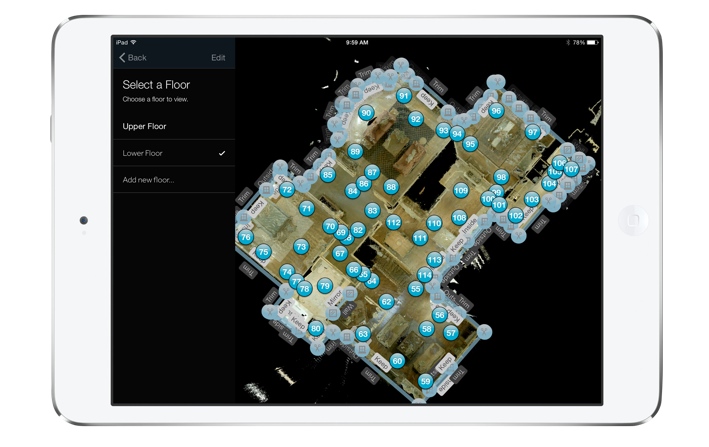

A floorplan view in Matterport’s Capture iPad app

Field experience

Field data collection requires the Pro2, a tripod, and an iPad (Matterport lists the compatible models). That’s it. No targets, external batteries, or additional paperwork. Each scan takes less than 90 seconds, and yes, I busted my 10K steps early in the day pirouetting around the camera to stay out of the pictures! However, each position needs a lot of overlap with the adjoining scan to make up for the lack of targets. Matterport suggests spacing scans 5-8 ft (1.5-2.5 m) apart. I pushed it a bit, but met with mixed results that depended on the environment. In the end, I decided to pace off 8 ft for simplicity.
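For planning purposes, the spacing guidance can be turned into a rough count of scan positions. The sketch below assumes an open rectangular area covered on a simple square grid, which real floor plans rarely are, and the cycle time per position is a parameter you supply. The function names are illustrative only.

```python
import math


def estimate_scan_count(width_ft: float, length_ft: float, spacing_ft: float = 8.0) -> int:
    """Rough number of scan positions for an open rectangle on a square grid."""
    if spacing_ft <= 0:
        raise ValueError("spacing_ft must be positive")
    cols = max(1, math.ceil(width_ft / spacing_ft))
    rows = max(1, math.ceil(length_ft / spacing_ft))
    return cols * rows


def estimate_capture_minutes(scan_count: int, cycle_seconds: float) -> float:
    """Total field time, given an assumed time per position (scan plus move)."""
    return scan_count * cycle_seconds / 60.0


# A 40 ft x 60 ft open area at 8 ft spacing.
positions = estimate_scan_count(40, 60)
# 90 s is only the upper bound quoted for a scan, so this is a pessimistic figure.
minutes = estimate_capture_minutes(positions, cycle_seconds=90)
print(positions, "positions, about", minutes, "minutes")
```

Walls, doorways, and furniture will push the real count up or down, so treat the result as a starting point rather than a schedule.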

Imaging requires the use of the Matterport Capture App (iOS only), with a connection to the Pro2’s internal Wi-Fi network. Users can see each scan in a 2D plan view as it is aligned. While imaging, you can mark features in the plan view that may cause registration errors (such as windows, mirrors, etc.).

My best advice when you’re doing field collection is to put your tripod on wheels if you can (lots of setups = sore forearm). Also, get the best iPad you can. I always killed the iPad battery before the Pro2’s, and, since all the data is stored on the iPad, memory is a precious commodity.

The registration algorithm uses both spatial and RGB data for alignment. This means that lighting and movement in the capture area can kill your productivity. I had a couple of instances in which a change in lighting conditions caused an alignment error (or a failed initial alignment). It’s best to stay away from populated areas and sunlight if possible. I also found that the initial alignment on the iPad seemed to take a bit longer if people were walking through my scene.

All in all, the capture experience was quite painless (excepting my forearm). As with any line-of-sight instrument, environmental conditions caused great variance in the amount of area that I could capture in a given time. However, in what I consider to be optimum conditions, I was pushing 7000 ft² (650 m²) per hour.

One of the biggest transitions from traditional laser scanning is adjusting your mind to avoid “big” open areas that call to those of us used to scanners with ranges in the hundreds of meters. At first, you really do feel like you’re over-scanning, but after a few days you develop the sense of how to best divide up an area.

Matterport runs on desktops, mobile systems, and VR headsets.

Office experience

As I mentioned, automation is a hallmark of the system, and the processing shows that best. Final alignment is simply a matter of connecting the iPad to a Wi-Fi network with internet access and hitting the upload button in the Capture app. When your data is ready, you receive an email with a link to the space’s location on the Matterport Cloud. The largest area I captured was about 25,000 ft² and it was uploaded, processed, and ready to view in about 10 hours.

The Matterport Cloud is where you do all your data editing and sharing. It is Software as a Service (SaaS) and they have myriad pricing options available. As Matterport is targeting the AEC space, my understanding is that they are pushing the envelope on service options to meet the needs of AEC users, who require a bit more customization than real estate users.

Traditionally, I have been anti-SaaS. However, it makes more sense in lower-cost, lower-accuracy settings, where I envision this being used by a lot of people who may not have access to traditional CAD applications.

The downside, of course, is that you have zero control over the data processing. If you have errors in the registration, you have to call tech support (which was very quick to respond) and hope they can fix it. This is where an iPad with a large screen can help you find those errors while still in the field. When something goes wrong, you can delete a single position and try again from a better location.

After you upload the data, fixing errors becomes a bit more difficult. You can download the data as an OBJ file (mesh) from Matterport Cloud, convert that to a point cloud, divide it, and then realign your data in order to repair registration errors, but that’s your only other option. In fact, you can’t download data from a single position either. It’s all or nothing, which I’m not too crazy about. I totally understand that this is not the way they want the Pro2 to be used, and that I’m probably not their core demographic, so I’m not expecting this to change anytime soon. The same goes for registration or alignment diagnostics. Other than spot-checking, you are not provided with any metrics regarding the accuracy of the alignment of all of the positions.
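As a minimal sketch of the mesh-to-point-cloud step, the snippet below pulls the vertex records out of an OBJ file and writes them as a plain XYZ text file that most point cloud tools can import. It relies only on the standard OBJ convention that vertex lines start with `v`. It keeps mesh vertices only and does not sample additional points across the faces, so the result will be much sparser than a true scan. The function names and file paths are illustrative.

```python
from typing import Iterable, List, Tuple

Point = Tuple[float, float, float]


def obj_vertices(lines: Iterable[str]) -> List[Point]:
    """Collect (x, y, z) from OBJ vertex lines, skipping normals, UVs, and faces."""
    points: List[Point] = []
    for line in lines:
        parts = line.split()
        # Geometric vertices are "v x y z [w]"; "vn" and "vt" are not vertices.
        if len(parts) >= 4 and parts[0] == "v":
            points.append((float(parts[1]), float(parts[2]), float(parts[3])))
    return points


def write_xyz(points: Iterable[Point], path: str) -> None:
    """Write one 'x y z' line per point."""
    with open(path, "w", encoding="utf-8") as handle:
        for x, y, z in points:
            handle.write(f"{x:.4f} {y:.4f} {z:.4f}\n")


def obj_to_xyz(obj_path: str, xyz_path: str) -> int:
    """Convert an OBJ file to XYZ and return the number of points written."""
    with open(obj_path, "r", encoding="utf-8", errors="replace") as handle:
        points = obj_vertices(handle)
    write_xyz(points, xyz_path)
    return len(points)
```

Calling `obj_to_xyz("space.obj", "space.xyz")` (hypothetical file names) would produce a file you can then divide and realign in your point cloud software of choice.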

The Matterport Cloud interface is very interesting, though. Essentially, you can look at your data in a “Showcase” or interact with your data in the “Workshop.” Both offer several cool viewing options such as floor plan, dollhouse, inside (similar to Google Street View), and Virtual Reality (VR). The VR data is compatible with Google Cardboard and Samsung Gear VR.

The Workshop interface offers tools like measure, label, take a snapshot, create videos, export images (including 360° panos), and add “Mattertags.” Mattertags are essentially hyperlinks that you can place in any location within the data you choose. All the Workshop data can be published to the Showcase (except measurements) in order to make it publicly available. The Cloud interface can be shared with Collaborators and the Showcase can be shared via weblink or embedded in a website.

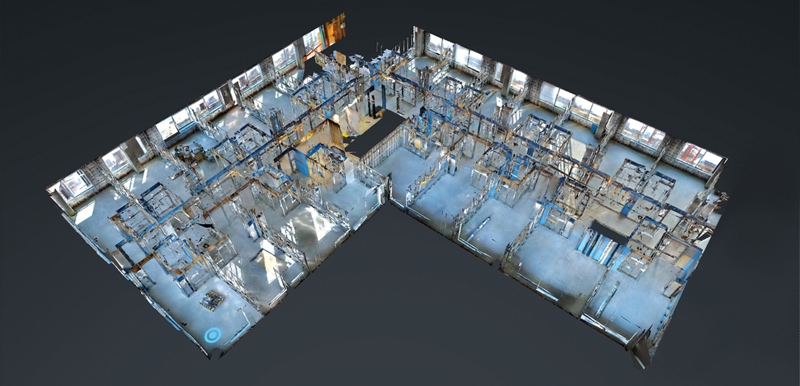

A Matterport dollhouse view of a construction project in progress

Moving Forward with AEC

As I mentioned, Matterport is looking at the AEC disciplines as a growth market. To that end, they are tailoring their product for the larger spaces and commercial applications needed in the AEC market.

They informed me that they will support the use of AprilTags in the next couple of months. These are to be used as supplemental targets/constraints in areas of limited geometric variation, like long hallways. Currently, a single space is restricted to about 50,000 ft², but size limitations will be gone in the future. On the VR side, Oculus Rift and HTC Vive support are on their roadmap and WebVR for Android should be out in the next month or so.

Will it replace laser scanning?

So, is the Pro2 going to replace laser scanners? I don’t think so. However, the ease of use, low cost per ft² of data capture, and great imagery had every one of my in-house modelers asking for it to be used in conjunction with a survey-grade device. In fact, my contacts at Matterport said that they see laser scanners in the data submitted to Matterport Cloud all of the time. When it comes to ease of use on the client side, the Pro2 excels as well. The photorealistic look and Street View-style viewer combine to make it easy to use and very familiar feeling, which is a great trick when you’re presenting something new.

I think we’ve all been in a meeting where what we supplied was overkill and, as a result, cost-prohibitive. Those are the meetings that the Pro2 was made for.

(Last but not least, many thanks to Sibyl Chen, Tomar Poran, and Daniel Prochazka from Matterport for answering my numerous emails full of questions. I’m quite sure it was more fun for me than for you!)