PatentlyApple.com is a must-read website if you care about technology development; it closely and carefully follows the patents Apple applies for and is granted. Apple is currently the 35th largest company in the United States, and when they develop new technology, it may be a good idea to pay attention. When they get a patent for a method of 3D data capture and display? Well, seems like we'd want to take a look at that, right?

I've written about Apple and 3D before, when they won a patent for a 3D display that had everyone buzzing about holograms from the iPhone. But this is different:

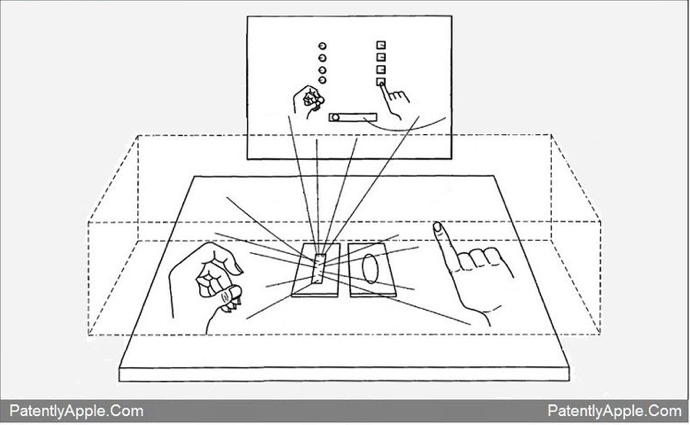

Apple’s patent covers a wild 3D system that could generate an invisible space in front of the user that could allow them to work with holographic images or project their hands onto a screen in front of them to manipulate switches or move pieces of virtual paper or parts of a presentation.

Essentially, the new iOS device, likely an iteration of the iPhone or iPad, would create a volume in which it captures 3D data, then feed that information back to a display system, allowing a fully interactive 3D experience. Sure, you can imagine all the applications for gaming and play, but what about serious applications? Training comes quickly to mind. You could push the actual buttons on the actual machine you're going to operate. You could be holding a scalpel and actually cut the artery you're repairing. You could play a piano keyboard with the same finger movements you'd use on a real one.

Of course, there’s the matter of weight and gravity and inertia and all that. It’s different pushing an immaterial button floating in the air than it is pushing a button on a real dashboard. But it’s getting pretty close to real, and it’s portable and scalable.

It's also interesting to see how Apple actually intends to go about accomplishing this. As PatentlyApple rightly notes, time-of-flight (TOF) laser scanning would probably be impractical in this case:

> Equipment costs and complexity are correspondingly high, making TOF unattractive for ordinary consumer applications.

You can say that again. I’m guessing Apple doesn’t want to throw a $10,000 scanner in each iPhone any time soon.

PatentlyApple also outlines some issues with white-light approaches and other alternatives. What Apple is proposing is this:

> User input is optically detected in an imaging volume by measuring the path length of an amplitude modulated scanning beam as a function of the phase shift thereof.

How they do that cheaply is unclear. I'd encourage you to read the full article for the details; PatentlyApple explains them well, and there's little point in my repeating or paraphrasing them.
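For the curious, here's a minimal Python sketch of the textbook principle behind that quoted claim, generic phase-shift ranging. It is not Apple's implementation, and the function names and the 100 MHz modulation frequency are hypothetical, chosen only for illustration. The idea: the beam's brightness is modulated at a known frequency f, so one modulation cycle spans a "wavelength" of c/f. The phase lag Δφ of the returning signal, as a fraction of a full 2π cycle, tells you what fraction of that wavelength the light traveled, so the round-trip path length is L = (Δφ / 2π) · (c / f).

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second (exact SI value)


def path_length_from_phase(phase_shift_rad: float, mod_freq_hz: float) -> float:
    """Round-trip path length implied by the phase shift of an amplitude-modulated beam."""
    # One modulation period corresponds to a length of c / f.
    modulation_wavelength = SPEED_OF_LIGHT / mod_freq_hz
    # The phase shift is the fraction of that period the light spent in flight.
    return (phase_shift_rad / (2 * math.pi)) * modulation_wavelength


def distance_from_phase(phase_shift_rad: float, mod_freq_hz: float) -> float:
    # The beam travels out to the hand (or object) and back, so halve the path length.
    return path_length_from_phase(phase_shift_rad, mod_freq_hz) / 2


def unambiguous_range(mod_freq_hz: float) -> float:
    # Phase wraps around every 2*pi, so targets farther than c / (2f)
    # alias onto shorter distances.
    return SPEED_OF_LIGHT / (2 * mod_freq_hz)


if __name__ == "__main__":
    f = 100e6  # hypothetical 100 MHz modulation frequency
    print(round(distance_from_phase(math.pi / 2, f), 4))  # about 0.3747 m
    print(round(unambiguous_range(f), 3))                 # about 1.499 m
```

The appeal for a consumer gadget, at least in principle, is that measuring a phase difference on a continuously modulated beam doesn't require timing individual light pulses directly. The trade-off is the wrap-around: the modulation frequency sets how far away a target can be before its reading becomes ambiguous, which for an interaction volume a short distance in front of a device may not be much of a limitation.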

Regardless, this is an indication that we'll see more and more inexpensive 3D data applications coming to market in the not-too-distant future. The applications are still being developed, and it behooves you to be on the lookout for technology you could bring into your workflows.

As for my workflow? Yeah, I’d like the virtual keyboard just fine (sorry about the ad – it’s a popular video…):