It’s hard to overstate the importance of Microsoft’s Kinect sensors to the growth of 3D capture technology at large. Originally an Xbox gaming interface, the Kinect depth-sensing system was quickly adopted by DIY tech heads and industrial technologists alike, seeing use in artistic, medical, industrial, and robotics applications, among a whole slew of others. The fun ended last October when Microsoft stopped manufacturing the device.

However, as the company’s CEO Satya Nadella told the gathered crowd at Microsoft’s Build keynote today, the company has continued developing the sensing tech for its HoloLens devices. In fact, that tech has advanced enough that Microsoft is going to package it up as “Project Kinect for Azure.”

Introducing Project Kinect for Azure, the most powerful sensor kit with spatial human and object understanding. #MSBuild

— Microsoft (@Microsoft) May 7, 2018

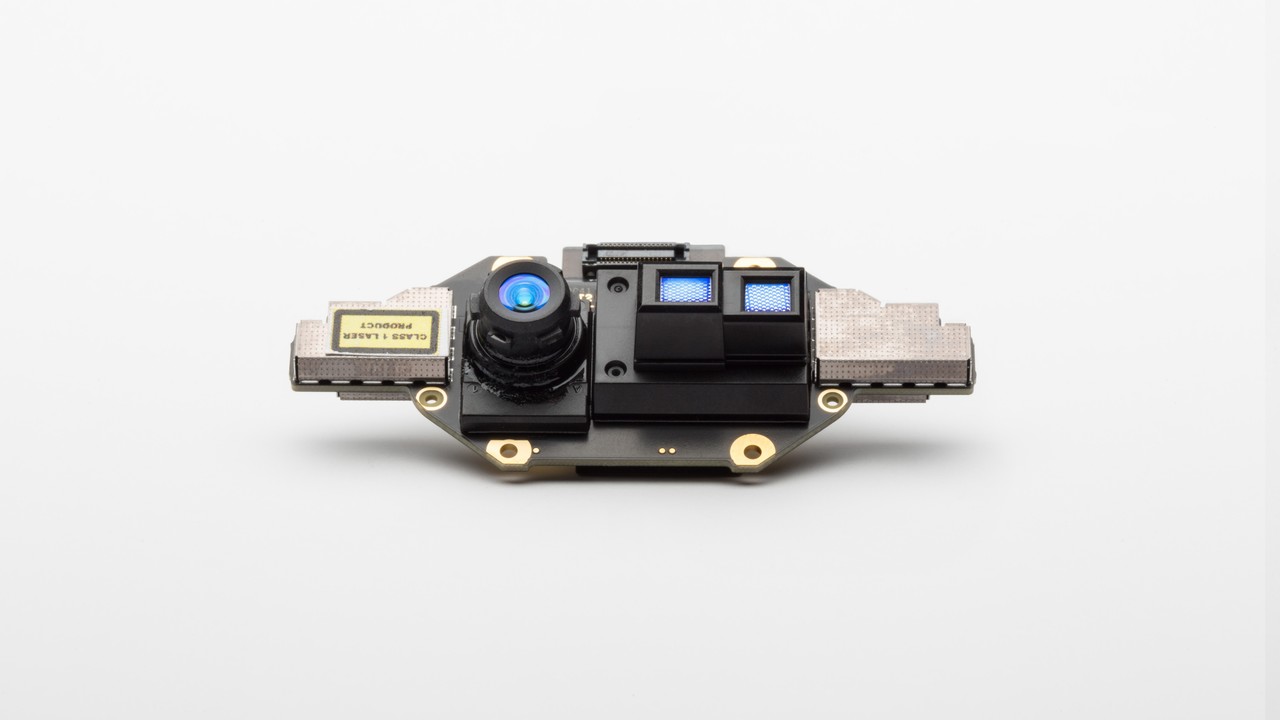

The company is promoting it as an AI-enabled sensor kit. Hardware-wise, that description leaves the Kinect’s form factor and specs wide open. Promotional images show a device with a wide-angle camera, a “Megapixel Sensor” (one megapixel?) and “dual lasers for close and far range.”

In a LinkedIn post, Kinect creator Alex Kipman went deeper. “What Satya described,” Kipman writes, “is a key advance in the evolution of the intelligent edge; the ability for devices to perceive the people, places and things around them.”

The new Kinect sensor kits offer two specific benefits for intelligent edge devices. First, the kits will use Microsoft’s latest time-of-flight (ToF) tech, allowing devices to “ascertain greater precision with less power consumption.” Second, the sensor kits will gather depth data to feed AI models. According to Kipman, depth data makes deep learning more efficient, resulting in “dramatically smaller networks needed for the same quality outcome” and a lower cost of operation for intelligent edge devices.

It remains to be seen if the new Kinect will be as important as the original, but it’s certainly off to an interesting start.