Scandy Pro is a 3D scanning app that exploits the active TrueDepth 3D sensor in Apple's iPhone X to enable accurate 3D capture. But Scandy, the company that makes it, has a lot more in its portfolio. It also makes a build-your-own augmented reality app called Cappy AR, and even offers an SDK called Scandy Core that lets you build 3D capture, SLAM, object tracking, and point-cloud QA tools into your mobile app with very little code and limited expertise.

Scandy characterizes itself less as an app maker and more as a provider of "smart, sensor-agnostic middleware," and a closer look at its products reveals a company exploring the full potential of 3D capture on a mobile device. When I spoke to co-founders Charles Carriere and Cole Wiley, the demo they gave me (a real-time volumetric video streaming solution that looks like a futuristic video chat) suggested that they might just pull it off.

Scandy Pro: A step towards pro-level scanning on a mobile device

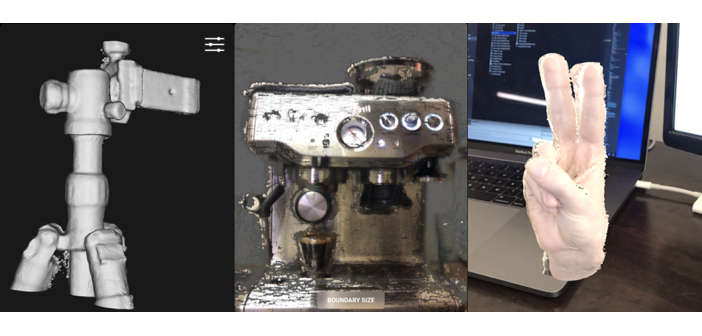

After releasing its first app for the TrueDepth sensor, Cappy AR, in early 2018, Scandy launched Scandy Pro in June. The app uses the same core technology you'll find in Cappy AR and the Scandy Core SDK, but with an interface designed to make 3D capture as easy as possible for novices. That means a workflow that gets you scans in "two taps (start scan, stop scan)." It also offers a number of features, including export to STL, OBJ, or PLY, and in-app editing tools like cropping and decimation. Carriere says color is stored per vertex for now, but true texture mapping is coming soon.

Free users can create an unlimited number of 3D scans, but are limited to saving one per day. For a subscription price of $2 a week, $6 a month, or $49 a year, users get unlimited saves. Though Scandy Pro was only released in June, Carriere tells me that it has been in constant development, receiving more than 25 updates in the past six months. For instance, the app now supports native upload to Sketchfab.

Scanning with a forward-facing sensor

That’s all well and good (you’re probably saying), but how useful is a 3D-scanning app that uses a sensor pointed at my face?

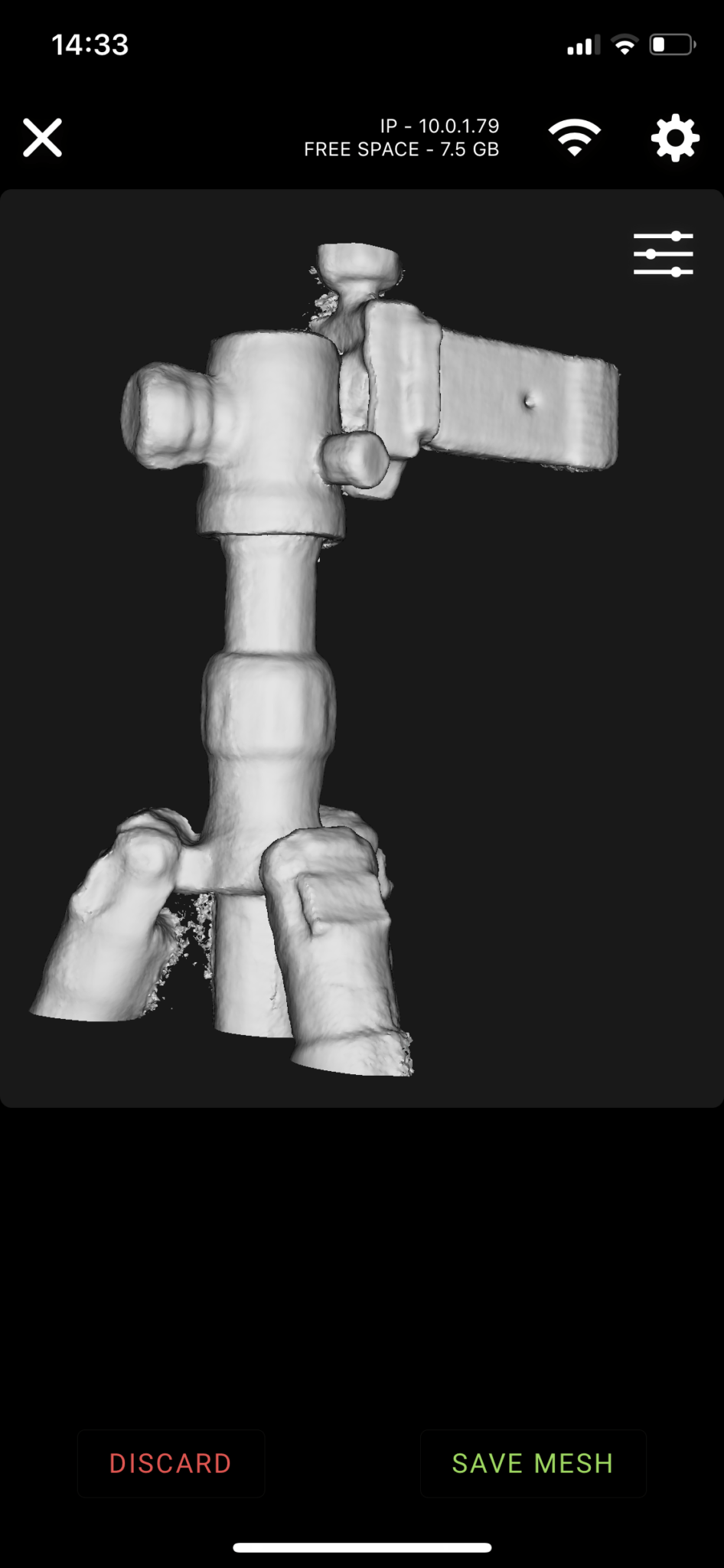

Carriere acknowledges that this hardware setup is not perfect. He explains that the company is actively "dealing with the challenges of using a front-facing sensor for people who want to do object scanning," which means they know they're at Apple's whim for now. However, they've developed a clever workaround that should be more than adequate in the short term: you connect your iPhone to another device over Wi-Fi, and your iPhone's screen is mirrored on that second device, so you can point the front-facing sensor at an object and still watch the capture. Scan away.

Here it's important to note that Scandy sees itself as a developer of middleware: they're the people who work with whatever 3D hardware stacks are available to make it easy for developers to build apps that use 3D scanning. For now, that means they'll need to get creative, but as soon as Apple puts a rear-facing 3D sensor in its phones, Scandy will adapt to that, too. Likewise, as soon as Android phones include a 3D sensor, Scandy will be available for Android.

A Scandy Pro scan.

Present and future uses for smartphone 3D scanning

Carriere repeated several times during our conversation that Scandy's goal is to make 3D capture as easy as possible on a mobile phone, for both professional users and consumers. When the technology gets to that point, he says, people will start to do more of it, and the industry will take off.

One of the most obvious avenues is AR/VR. "If the augmented reality and virtual reality promise is ever going to be fulfilled," Carriere explains, "users need to be able to create 3D content easier. If the only reason you're putting on a headset is to consume something created by a digital artist, or to play a video game, I think the use cases for the technology are going to be somewhat limited." On the other hand, he says, if you can create the 3D content that will be viewed in 3D yourself, and do so simply, it opens up a whole raft of use cases that we can't yet predict.

In fact, Carriere and his co-founder Cole Wiley both believe that there are uses for easy 3D capture far beyond AR/VR. With Scandy's middleware, they tell me, developers should be able to create apps for tasks like scanning a head to size a helmet or a pair of glasses, scanning a foot to size orthotics, scanning a hand to make bespoke gloves or personalized jewelry, scanning a room to test out furniture, and so on. Think of any way you've imagined using a 3D scanner on your phone, and Scandy thinks its middleware can help make that possible.

Volumetric 3D video

At one point during our conversation, Wiley shared his screen to show me a real-time, live-updating volumetric 3D scan of his face as captured by the iPhone's TrueDepth sensor. "That's a volumetric 3D video," says Carriere. "It's actually a mesh. That's not RGB-D; it's a mesh that's being created and live-streamed from a single point of view."

The co-founders sent me to a web URL, which displayed the same video in real time, but with interactive features. I zoomed in and out, and moused around to rotate the scan. I compared the volumetric video to the video-chat image of Wiley's face, and it checked out: I was seeing a live-streamed volumetric video. They told me that Scandy has been developing volumetric video for a few months, and Wiley said he believes it will help the average person understand the value and potential of 3D tech.

Not the most flattering angle, but you get the point.

Wiley explains that he can demo scanning an object and viewing it in an AR experience, or show someone how they can capture an object in millimeter-accurate 3D, but so far neither of those applications has been particularly effective at demonstrating why 3D technology is so important. However, when you show those same people that 3D tech on your phone will allow you to capture 3D video and stream it "to everyone in the world simultaneously, on any device they could possibly have with them," they find it much easier to understand and engage with. "There's no extra challenge with this," he says. "I launched the app, I sent you to a URL, and now we're having a volumetric chat instead of just a video chat."

For now, the volumetric video is still in development, but Wiley says that Scandy is nearly ready to launch it for some limited use cases, and plans to license the technology to interested app developers. Carriere tells me that the technology could one day include the ability to capture a scan and send it to whomever you're chatting with. Say you're at a furniture store and want to show your significant other what a table looks like: you could capture a quick scan and send it along.

Scandy’s hope is that this tech will reach a wide audience, and that they can see where it goes from there as people start to experiment with “the communication tool of the future.”

Breaking down the last of the bottlenecks

Scandy might know better than anyone what the future holds for 3D sensors in mobile devices. Why? They’ve worked with most of these sensors at one point or another.

Carriere and Wiley started the company in 2014 to develop middleware for the Occipital Structure sensor. Since that effort only "worked about as well as it could have for the technology stack that was out there at the time," and the market was thin, Scandy moved on. They developed a workflow for 3D printing spherical images. They developed an app for the time-of-flight sensors in Google Tango devices (RIP), and continued development work until Apple released the iPhone X with the TrueDepth sensor.

After this somewhat tumultuous run, Scandy feels "really, really good that the hardware problem is solved going forward," and that flagship phones will all have 3D sensors in them. They say the only remaining bottleneck to further development is user education, a problem they hope volumetric 3D video will solve.

So now that the bottlenecks are disappearing, what comes next? “We have an idea of where it’s going to go,” Carriere says, “but it’s probably not going in that direction. We do know that it’s going to be exciting, and it’s going to be cool.”