In recent years, the AEC market has seen a glut of new technologies that capture, process, and visualize 3D data. With so many solutions hitting the market, how do you know whether the technologies are mature enough to use? How do you know what technologies are likely to come next?


To answer these questions, I called Kelly Cone. In his years at The Beck Group, Cone worked as BIM manager, innovations director, and director of virtual design and construction. Now, he’s the VP of product management at ClearEdge 3D. In other words, he knows AEC tools, he knows the technological challenges the industry is still facing, and he knows as much as anybody about how the hyped new technologies are really going to pan out.

We talked democratization of 3D capture, 3D data processing software, machine learning, accuracy, mixed reality, and virtual reality. Dig in for a taste of what’s next.

1- Democratization of 3D Capture

Sean Higgins: Here’s a big-picture question–What’s the big takeaway from this past year for 3D technologies in AEC?

Kelly Cone: The thing that’s had the most impact is the democratization of capture. To me, that’s the obvious trend, whether it’s reducing prices of decent quality UAVs, increasing the ease of use of UAVs, or, I mean, the BLK360… That’s only moderately impactful!

Do you think the people who buy these technologies understand how much expertise it takes to use them properly?

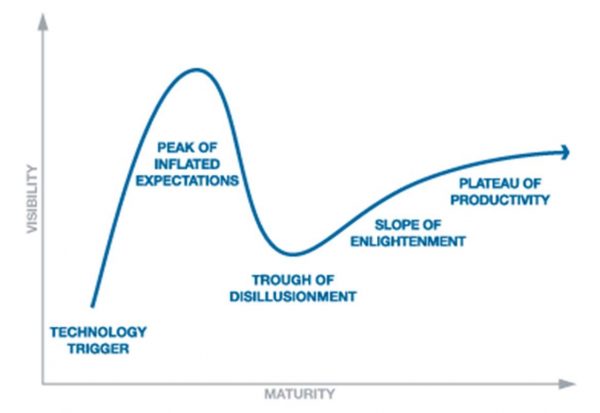

Well, it’s one of those things where I think we’re definitely going to go through a bit of a chasm: the chasm of despair. Capture is what has been successfully democratized so far. However, the registration and control aspect is still extremely challenging and technical. There are going to be people who have the ability to capture data but have neither the easy-to-use tools nor the technical experience with the more complex tools to effectively control and manage the registration side.

It’s less of a problem on the photogrammetry side. That’s kind of a green field; there’s not an expectation.

The Gartner hype cycle

Are customers expecting this, or are they so excited about the possibility of capturing their own data that they’re totally unprepared for what’s in store?

I think they’re pretty unprepared. If you think about it from their perspective, they’ve been hiring service providers for this, so for them, up until this point how the sausage gets made has been unimportant. They just like how the sausage tastes–you know, it’s a good kielbasa right now. The problem is that, suddenly, they’re getting into the sausage-making business. Somebody has found a great way to package up the ingredients for them, but has forgotten to build a sausage grinder and casing machine.

I think the industry can see the chasm coming. I think service providers see it coming, and we’re trying to warn our customers, but I think the customers probably see that as protectionism rather than a genuine warning. They’re going to find out that it was actually a genuine warning.

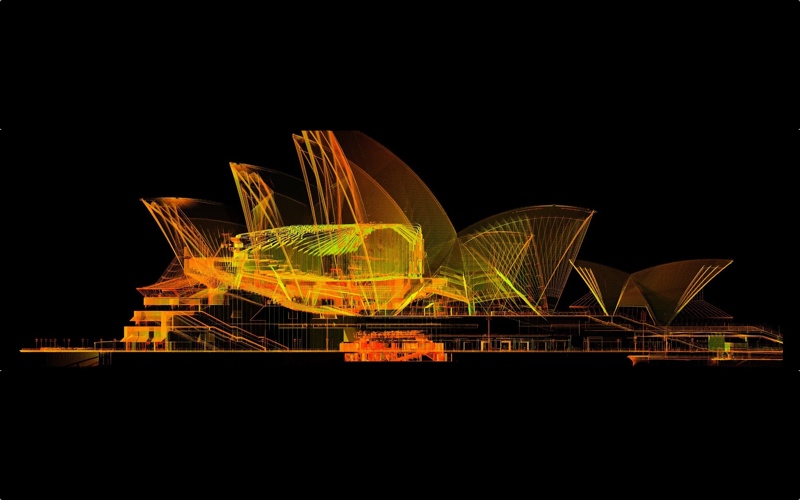

A scan of the Sydney Opera House, courtesy of The Scottish Ten

Do you think it will take a while for the market to work through this problem?

I suspect that there will be a lag time. However, there’s a lot of opportunity there. Someone can make a killing by packaging up a solution that handles the right kind of control and registration workflow with a consumer-friendly feel and price range, but that also includes the technical back-end to deal with errors, mistakes, and inconsistencies, fix registrations, and tie poorly overlapped scans together when cloud-to-cloud registration breaks.

Those two things are not necessarily unmergeable. There’s this kind of myth that ease of use is achieved by offering minimal functionality. They are not perfectly correlated things. There’s no doubt that the more capabilities you add, the more likely it is that the software will be complicated and harder to use. However, there are some really great tools out there that manage to merge a technically complex set of features and get a very good user experience. It’s just that none of those software companies exist in the reality capture space currently.

2- 3D Data Processing and Machine Learning

You hear a lot that the big problem is not capturing the data, it’s processing the data. Is that true?

I wouldn’t say that so much as that processing is the first problem, because it’s the next step in the workflow. Until that problem is overcome, the other problems will not be as apparent, because the volume of data generated will remain relatively low.

Once somebody figures out the registration and controls–how to make that easy to do, but with a high level of control–people will be generating so much data, because they’ll be able to, and do so much with it. Then the big problems are going to be, How do we store it? How do we view it? How do we understand it? I think they’re all similarly sized problems, it’s just that one is the first ceiling we’re going to bump our heads into.

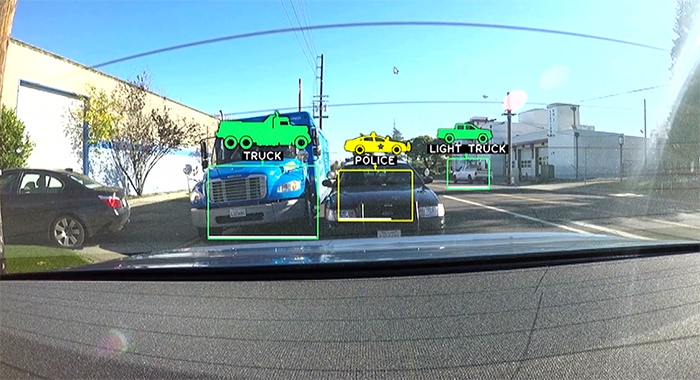

NVIDIA machine learning for self-driving cars. Source: NVIDIA

Do you see any overlap between this processing problem and machine learning? It’s a big topic in technology, but it seems to get weirdly limited play in the 3D tech industry.

We’ve been lucky to get a lot of really, really smart computer vision experts into the AEC reality capture space over the last 10 years. And they’re focused on writing computer vision algorithms to solve our problems. This is one of those interesting things: experts in one field tend to focus on that one field. They’re great at it, and crossing over means starting from scratch and not being an expert anymore. So, machine learning is something we need other smart people to implement. Machine learning is relatively new though, and the AEC space does not pay the big bucks that Google or Tesla does, or any of these other big companies that have been sucking up the machine learning talent. It’s been very hard to get those people into our industry.

I think it’s absolutely a relevant solution for this industry. If machine learning can teach a car to drive, then yes, you should be able to teach a computer to register data. But I don’t know who’s going to be willing to pay somebody $250,000 straight out of college to come solve that problem for us.

A mockup of Google’s Tango handheld scanner.

Are there any other solutions that might help solve the same problems, but without costing a cool quarter million?

Fortunately, there are some other really good solutions. I see other people doing stuff in fits and starts—for instance, stuff with accelerometers. If you’ve got a consumer-grade IMU that you start slapping on scanners, the ability to provide a lot of information—Z+F does that with their 5010x. It has a big impact, but it’s brand specific. It would be a big deal if somebody comes out with a similar solution that sits on the scanner, or maybe finds a way to get that data out of the cell phones that we’re already carrying around. There are things that we can do to help re-define the problem, which will assist with the cloud-to-cloud registration stuff.

I’ve always been amazed that QR codes are not a part of the target recognition problem. There are so many ways for a piece of software using, again, computer vision, to understand where it is in space, using only information that we post on a little piece of paper. And yet, that has not been integrated into the workflow, but that’s the really powerful potential opportunity there to make registration simpler, more reliable. That doesn’t require machine learning—it would be cool, but I don’t think we’re going to get that as soon as we should.

Do you think AEC and other related spaces may get more advanced machine learning some day? I mean, if Google is developing the technology for Tango, and Apple is using machine learning to recognize specific sorts of objects in a point cloud, does that mean that it’s only a matter of time before it makes it over to AEC?

It will trickle down. For heaven’s sake, all of the photogrammetry stuff that’s becoming relevant for the construction vertical is a trickle-down from the survey space, which was a trickle-down from the DoD stuff. Machine learning is already being employed to do object recognition in reality capture data for self-driving cars. It’s just all custom-coded into applications that have no relevance to us, that sit on dedicated hardware that is a very expensive piece of automotive equipment. Until that gets commercialized into a more generic piece of software, or there are just way too many machine learning graduates and people with that experience are no longer worth that much money, it will stay out of our reach. Eventually, though, we’ll pick it up.

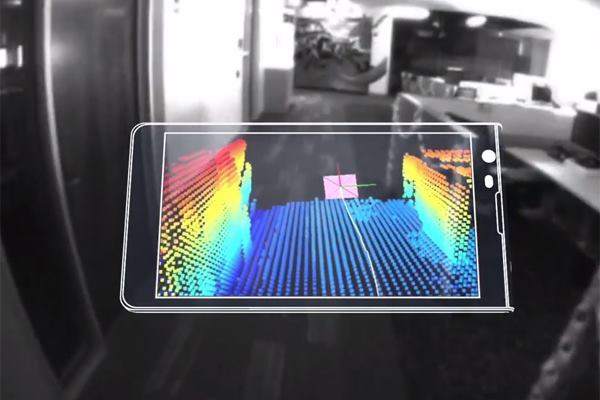

Redpoint’s indoor positioning system for AEC.

3- Improving Absolute Accuracy

You’ve mentioned that accuracy is an issue in AEC, too. Can you explain that?

One of the big gaps in AEC is correlating a relatively accurate position to an absolutely accurate position. That’s really the part where our current solutions break down. It’s kind of important for buildings.

How will bridging this gap, and figuring out how to offer better absolute accuracy, enable better technologies in AEC? For instance, would better absolute accuracy enable a wider use of mixed-reality technology on the job site?

I think that’s the big holdup. What everybody dreams about is the ability to walk out on a job site, hold something up, and for a little magical hard hat to tell you if you’ve got it in the right place or not. You put the screw in and it stays, and everyone is happy. But that doesn’t happen without a high level of absolute accuracy. Redpoint has done the best job of providing an accurate indoor positioning system that I’ve seen, but it still isn’t quite there for measurement or installation of work.

Accuracy is also the thing that’s holding back robotics in the construction industry, and frankly that’s probably the far more game-changing application of absolute positioning within a building. Robotics is another big area of innovation, certainly.

4- VR and AR in AEC

Before I let you go, could you talk a little bit about where you see this mixed, augmented, virtual reality technology going? It seems like half of the press releases I get these days are about those technologies and their use in AEC. What are your general thoughts about it? Is it overhyped? Is it an interesting thing that’s almost there? What’s your take?

I think there are certain areas where it’s absolutely there. At Beck, they had an HTC Vive, and before that they had an Oculus Rift–that’s all VR. I think virtual reality has very much arrived, and not so much as a client tool or a sales tool, though I think that will come. It’s actually most powerful as a design tool.

Martin Brothers using AR to build a bathroom pod with no blueprints.

I can’t remember who told this to me, but way back in college, somebody explained that there was a reason why there have always been only one or two or three great, amazing, incredible architects alive at any given point in time. Sure, as population has increased, that number has increased, too. But their argument was that the ability to synthesize 2D drawings, sketches, and other abstract information, and build a very accurate vision of what a 3D space is going to feel and look like, is actually an extremely uncommon skill. Most okay architecture in the world happens because the people designing it are not actually that great at synthesizing the information, which is a very hard thing to do.

What’s fascinating about VR is that you’ve basically democratized that skill set. Now anybody can build a 3D model, slap on a VR headset, occupy that space, and understand those spatial relationships in a way that’s extremely high fidelity compared to what it will really feel like. If you’re good at design and you have those tools, you should be able to make great spaces. That statement was not ever true prior to VR. You might be a great designer, but your ability to correlate that into a great space was just one in a million. I think that’s pretty fascinating.

How about mixed reality?

For mixed reality, probably the first application that’s super useful within this industry is going to be construction verification. If you’re not trying to install work, but instead trying to figure out if the work you tried to install was correct, the problems are much easier to solve, and the need for accuracy is actually lower from a mixed-reality standpoint, because you can orient yourself to things that already exist in the real world, as opposed to trying to create something using mixed reality. I think we already see that use case, and I hope to leverage that in my new role working on construction QA software. Installation using augmented reality is the ultimate goal, but we’ve got a few years to wait before that is reality.